The Center for Distributed and Grid Computing performs research on programming models, compilers, and runtime systems for high-performance parallel computing on multicore and manycore processors and on clusters. The goal is to raise the level of abstraction at which parallel application programs can be written without incurring a significant performance penalty. The current focus is on big-data applications such computations on large graphs and hypergraphs, and on parallelizing tool-chains for electronic chip design.

Website

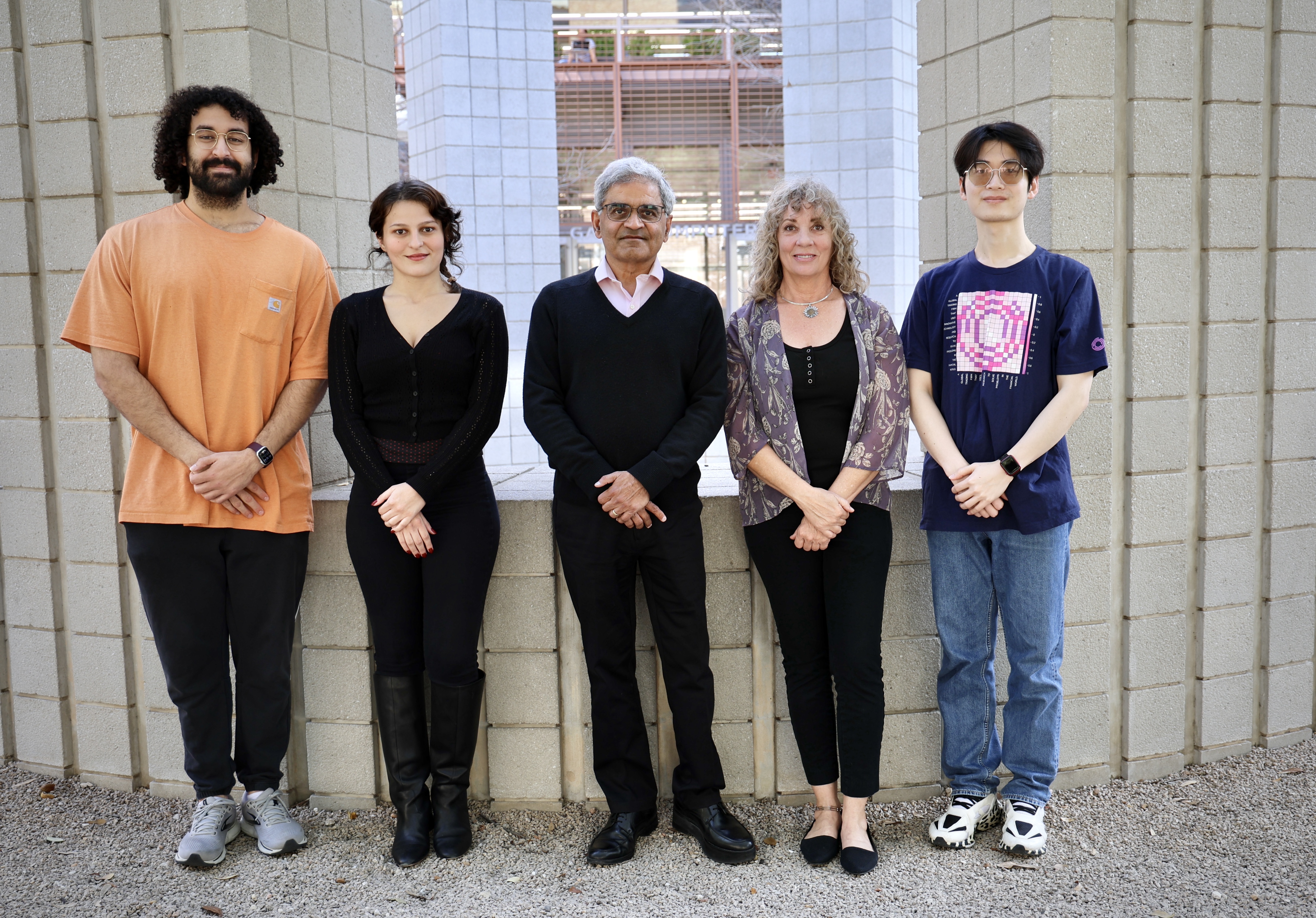

Directors

Students

Staff

Members outside the Oden Institute

Kaizhao Liang

Research at the Center is conducted in collaboration with other Oden Institute centers, the Departments of Computer Science and Electrical and Computer Engineering at The University of Texas at Austin, and with several other universities and national laboratories including MIT, Yale, the University of Illinois at Urbana-Champaign, the University of Padova in Italy, and the AGH University in Poland. Industrial collaborators include Intel, Cadence, IBM and Apple. Currently, a major research theme in the Center is the exploitation of data-parallelism in "irregular" programs that manipulate complex data structures such as trees and graphs. Data-parallelism in regular programs that manipulate arrays is well understood, and is exploited routinely in parallel programming; for example, if a program multiplies two matrices, the rows of the first matrix can be multiplied by the second matrix in parallel. Data-parallelism in irregular programs is less well understood. Examples of such algorithms include Delaunay mesh generation and refinement, data-mining algorithms for clustering and ranking, and algorithms for logic synthesis, placement and routing in chip designs. The Center is investigating language constructs, compiler technology and runtime systems for exploiting this kind of irregular data-parallelism. Research performed has shown that irregular data-parallelism manifests itself in work-list based algorithms that perform computations on elements of sorted or unsorted work-lists, and that this parallelism can be exploited using optimistic parallelization techniques.

Projects:

Title: IDEAL - An Intelligent Design Environment for Asynchronous Logic

Funding agency: DARPA

PI: Rajit Manohar, Yale Co-PI: Keshav Pingali

The IDEAL project is funded by DARPA's Electronic Resurgence Initiative (ERI) program, which seeks to build open-source tool-chains for synchronous and asynchronous chip design. Many academic and industry groups are designing new processors and accelerators for AI and ML applications, but at present, the tool-chains for designing these chips are not in the public domain since they are controlled by a handful of EDA vendors. The IDEAL project, led by Professor Rajit Manohar at Yale, is building an open-source parallel tool-chain for asynchronous chip design. This tool-chain is written on top of the Galois programming system, which has been developed at the Center for Distributed and Grid Computing. The Galois system permits the exploitation of fine-grain parallelism on multicore processors without requiring the partitioning of the circuit. IDEAL modules that have been parallelizing successfully using the Galois system include logic synthesis, static timing analysis, placement and routing. Some of these modules are now being used by other performers in the ERI project. Test chips designed using this tool-chain have been implemented in 65nm, 28nm, and 14nm technologies.

Title: The Mongo Graph Machine

Funding agency: NSF

PI: Keshav Pingali co-PI: Arvind (MIT)

Many problem domains such as machine learning and the study of social networks require the analysis of extremely large, sparse graphs with billions of nodes and hundreds of billions of edges. At present, two approaches are used to deal with such enormous graphs: in-memory computing and out-of-core computing. In the in-memory approach, the entire graph is stored in main memory for the duration of the computation; since single machines have limited memory, this approach requires the use of large clusters of machines, which can be inefficient because of the time and energy overheads of inter-machine communication. In the out-of-core approach, the graph is stored in secondary storage such as disk, and portions of the graphs are brought into memory when they are needed for computation. While out-of-core processing of large graphs avoids some of the problems of using clusters, the programming models supported by current out-of-core systems are very limited, and cannot express important algorithms such as collaborative filtering with stochastic gradient descent, which is used widely in recommendation systems. The Mongo Graph Machine (MGM) project addresses these problems as follows. The project uses NAND flash memory with FPGA-based in-store accelerators to store and process extremely large graphs. A single machine can accommodate 1 TB to 16 TBs of flash memory using current NAND technology, so the need for large clusters is eliminated. Flash-based machines, however, require significant extra computation to make efficient use of bandwidth and the architecture of general-purpose processors is not suitable for such computations. Therefore, in-store accelerators are being implemented to reorganize irregular data accesses into page-granularity data accesses required to use flash memory. To insulate application programmers from the complexity of out-of-core programming, out-of-core capability is being added to the Galois system, which has already been shown to be effective for high-level programming of graph analytics applications on distributed, heterogeneous computing platforms. Application programs will not need to be aware of whether the computation is in-memory or out-of-core, which is a significant simplification.