Past Event: CSEM Student Forum

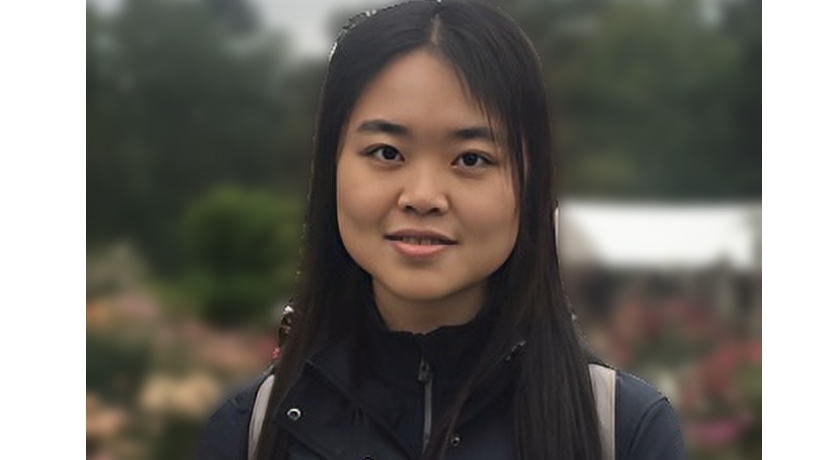

Yijun Dong, CSEM PhD Student, Oden Institute, UT Austin

12:30 – 1PM

Friday Oct 14, 2022

POB 6.304

Modern machine learning models, especially deep learning models, require a large number of training samples. Since data collection and human annotation are expensive, data augmentation has become ubiquitous for creating artificial labeled samples and improving generalization performance. This practice is justified by the observation that the semantics of an image remain unchanged under simple transformations such as obscuring, flipping, rotation, color jitter, and rescaling. Conventional algorithms use data augmentation to expand the training set.
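The conventional practice of expanding the labeled training set with augmented copies can be sketched in a few lines of Python. This is a minimal illustration that assumes images are small lists of pixel rows. The helpers `hflip`, `obscure`, and `expand_with_augmentations` are hypothetical names, not part of any library.

```python
from typing import Callable, List, Tuple

# Hypothetical representation: a tiny grayscale image as rows of pixel values.
Image = List[List[float]]
Sample = Tuple[Image, str]


def hflip(img: Image) -> Image:
    """Horizontal flip: reverse the pixels in each row."""
    return [row[::-1] for row in img]


def obscure(img: Image, size: int = 1) -> Image:
    """Obscure (cut out) a size-by-size patch in the top-left corner."""
    out = [row[:] for row in img]  # copy so the original image is not mutated
    for i in range(min(size, len(out))):
        for j in range(min(size, len(out[i]))):
            out[i][j] = 0.0
    return out


def expand_with_augmentations(
    data: List[Sample], transforms: List[Callable[[Image], Image]]
) -> List[Sample]:
    """DA-ERM-style expansion: keep every labeled pair and append one
    augmented copy per transform, reusing the original label.
    This assumes the transforms are label-invariant."""
    expanded = list(data)
    for x, y in data:
        for t in transforms:
            expanded.append((t(x), y))
    return expanded


train = [([[5.0, 1.0], [2.0, 3.0]], "cat")]
expanded = expand_with_augmentations(train, [hflip, obscure])
for img, label in expanded:
    print(img, label)
```

Each augmented copy simply inherits its source label, so this approach can only ever augment samples that already have labels.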

Data Augmentation Consistency (DAC) regularization, as an alternative, encourages the model to produce similar predictions on the original and augmented samples, and it has contributed to many recent state-of-the-art supervised and semi-supervised algorithms (e.g., FixMatch and AdaMatch). DAC can exploit unlabeled samples, since one can augment a sample and enforce consistent predictions without knowing its true label. This bypasses a limitation of conventional algorithms, which can only augment labeled samples and add them to the training set (referred to as DA-ERM). However, it is not well understood whether DAC offers additional algorithmic benefits over DA-ERM. This work therefore seeks a theoretical answer.
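The difference between the two objectives can be sketched with a linear model and squared loss. This is a simplified illustration under stated assumptions: the squared-error consistency penalty, the weight `lam`, the nuisance-shift augmentation, and the helper names (`da_erm_loss`, `dac_loss`, `shift_nuisance`) are hypothetical. Algorithms such as FixMatch use different losses in practice (e.g., pseudo-labels with cross-entropy).

```python
from typing import List, Sequence

Vector = List[float]


def predict(w: Sequence[float], x: Sequence[float]) -> float:
    """Linear model f_w(x) = w . x."""
    return sum(wi * xi for wi, xi in zip(w, x))


def da_erm_loss(w, labeled, augment) -> float:
    """DA-ERM: mean squared error over the labeled samples plus their
    augmented copies, each copy reusing the original label."""
    pairs = list(labeled) + [(augment(x), y) for x, y in labeled]
    return sum((predict(w, x) - y) ** 2 for x, y in pairs) / len(pairs)


def dac_loss(w, labeled, unlabeled, augment, lam: float) -> float:
    """DAC: mean squared error on the original labeled samples plus lam
    times a consistency penalty, the mean squared gap between predictions
    on x and augment(x). The penalty needs no labels, so it is evaluated
    on labeled and unlabeled inputs alike."""
    sup = sum((predict(w, x) - y) ** 2 for x, y in labeled) / len(labeled)
    inputs = [x for x, _ in labeled] + list(unlabeled)
    cons = sum(
        (predict(w, x) - predict(w, augment(x))) ** 2 for x in inputs
    ) / len(inputs)
    return sup + lam * cons


def shift_nuisance(x: Vector) -> Vector:
    """Hypothetical label-invariant augmentation: shift coordinate 1,
    assumed to be a nuisance feature that does not affect the label."""
    return [x[0], x[1] + 1.0]


labeled = [([1.0, 3.0], 2.0)]
unlabeled = [[4.0, -1.0]]
w = [2.0, 0.5]
print(da_erm_loss(w, labeled, shift_nuisance))                   # 3.125
print(dac_loss(w, labeled, unlabeled, shift_nuisance, lam=1.0))  # 2.5
```

Only `dac_loss` uses `unlabeled`: the consistency term needs inputs but no labels, which is how DAC can draw on unlabeled data. With `w = [2.0, 0.0]`, which ignores the nuisance coordinate, both losses are zero on this toy data.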

In this work, we first present a simple and novel analysis of linear regression with label-invariant augmentations, demonstrating that DAC is intrinsically more efficient than empirical risk minimization on augmented data (DA-ERM). The analysis is then extended to non-linear models (e.g., neural networks) and to misspecified augmentations (i.e., augmentations that change the labels), where it again demonstrates the merit of DAC over DA-ERM. Finally, we perform experiments on CIFAR-100 with WideResNet that make a clean, apples-to-apples comparison (i.e., with no extra modeling or data tweaks) between DAC and DA-ERM; together, these results demonstrate the superior efficacy of DAC.
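To make the linear-regression setting concrete, one way to write DAC with label-invariant augmentations is as a constrained least-squares problem. This is a simplified sketch under assumed notation (the matrix $\Delta$, the counts $n$ and $N$, and the hard-constraint form are introduced here for illustration), not the exact formulation or theorem from the talk.

```latex
% Illustrative setup (assumed): inputs x in R^d, linear model f_w(x) = w^T x,
% n labeled pairs (x_i, y_i), N >= n inputs in total (labeled plus unlabeled),
% and one augmentation A applied to each input.
\[
\Delta = \big[\, A(x_1) - x_1,\ \dots,\ A(x_N) - x_N \,\big] \in \mathbb{R}^{d \times N}
\]
% DAC written as a hard consistency constraint on the predictions:
\[
\hat{w}_{\mathrm{DAC}} = \operatorname*{arg\,min}_{w \in \mathbb{R}^d}
  \frac{1}{n} \sum_{i=1}^{n} \big( w^\top x_i - y_i \big)^2
  \quad \text{s.t.} \quad \Delta^\top w = 0
\]
% Label invariance: the ground truth w^* satisfies the same constraint,
\[
w^{*\top} A(x_j) = w^{*\top} x_j \ \ (j = 1, \dots, N)
  \iff \Delta^\top w^* = 0,
\]
% so DAC searches only the subspace ker(Delta^T), of dimension d - rank(Delta).
```

As a heuristic reading: for fixed-design least squares with noise variance $\sigma^2$ and a full-column-rank design, the in-sample excess risk of the unconstrained estimator is $\sigma^2 d / n$, and restricting the search to a subspace of dimension $d - \operatorname{rank}(\Delta)$ brings this to at most $\sigma^2 (d - \operatorname{rank}(\Delta)) / n$. DA-ERM, by contrast, only appends the rows $A(x_i)$ with labels $y_i$ to the design and never imposes $\Delta^\top w = 0$ directly.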

Yijun Dong is a CSEM Ph.D. student at the Oden Institute, co-advised by Professors Per-Gunnar Martinsson and Rachel Ward. Before graduate school, Yijun received her B.S. degree in applied mathematics and engineering science from Emory University in 2018. Her research interests lie in randomized numerical linear algebra and theoretical machine learning.